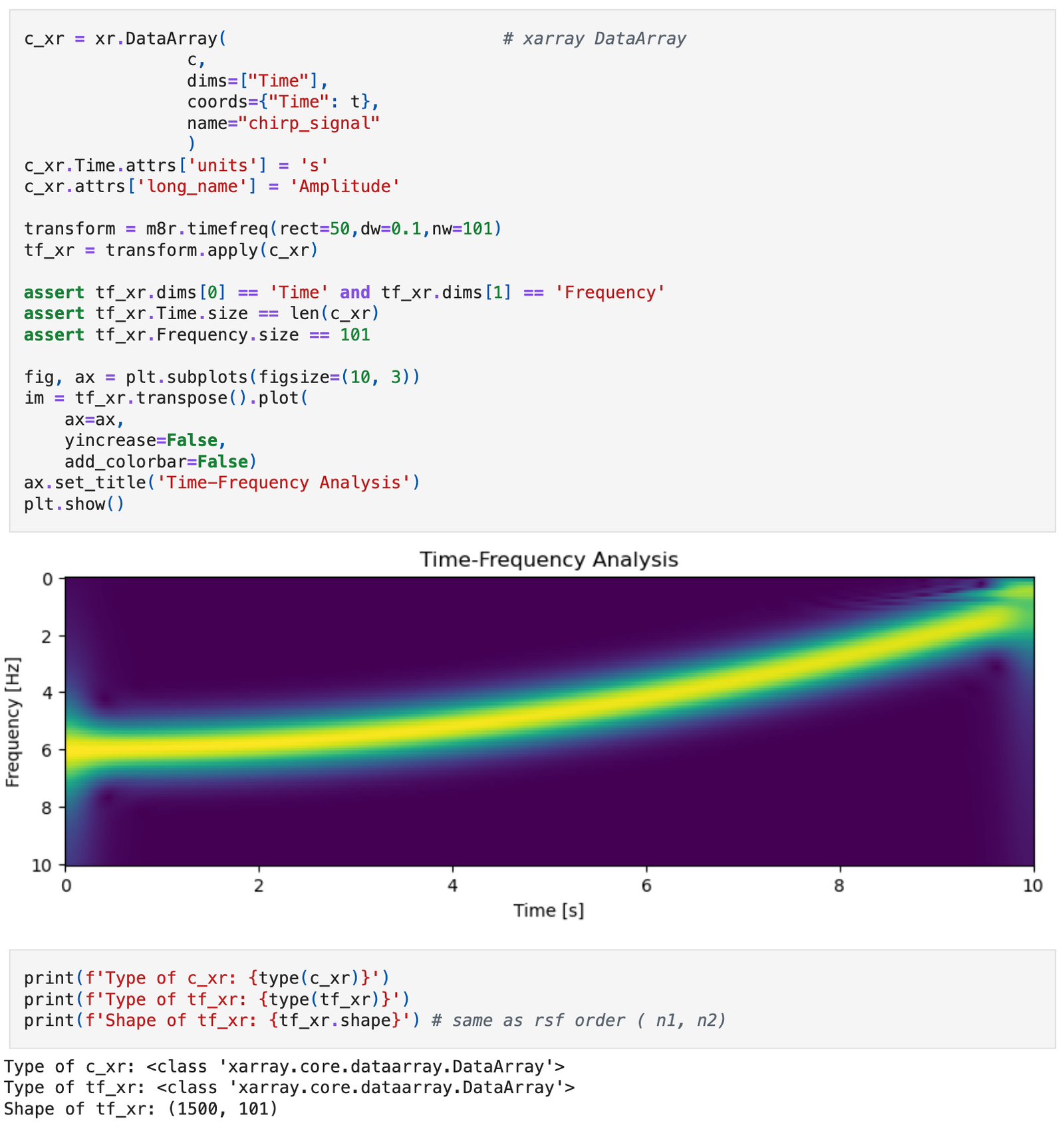

A new paper is added to the collection of reproducible documents: Fast streaming local time-frequency transform for nonstationary seismic data processing

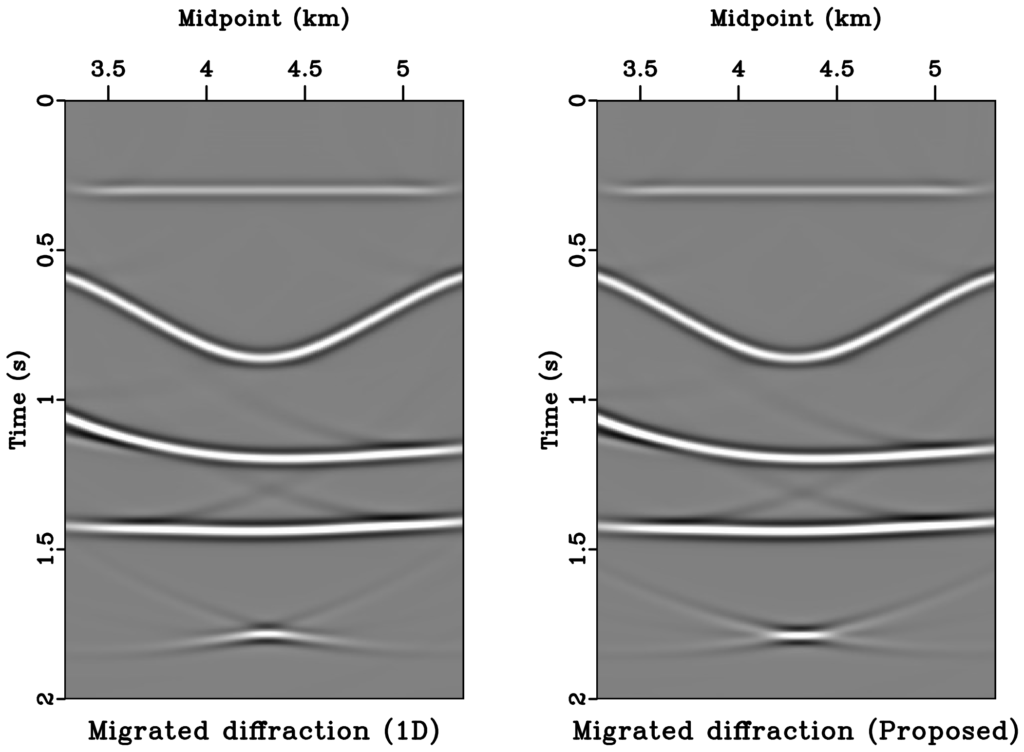

Schematic illustration of the proposed SLTFT

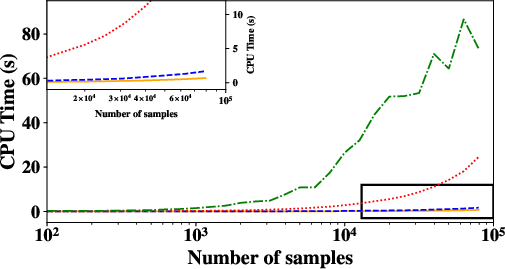

CPU time comparison among the time-frequency analysis methods. Orange line: STFT; blue dashed line: SLTFT; red dotted line: streaming attributes; green dash-dot line: LTF decomposition. The CPU time of the LTF decomposition depends on its convergence speed, which results in a non-smooth curve.

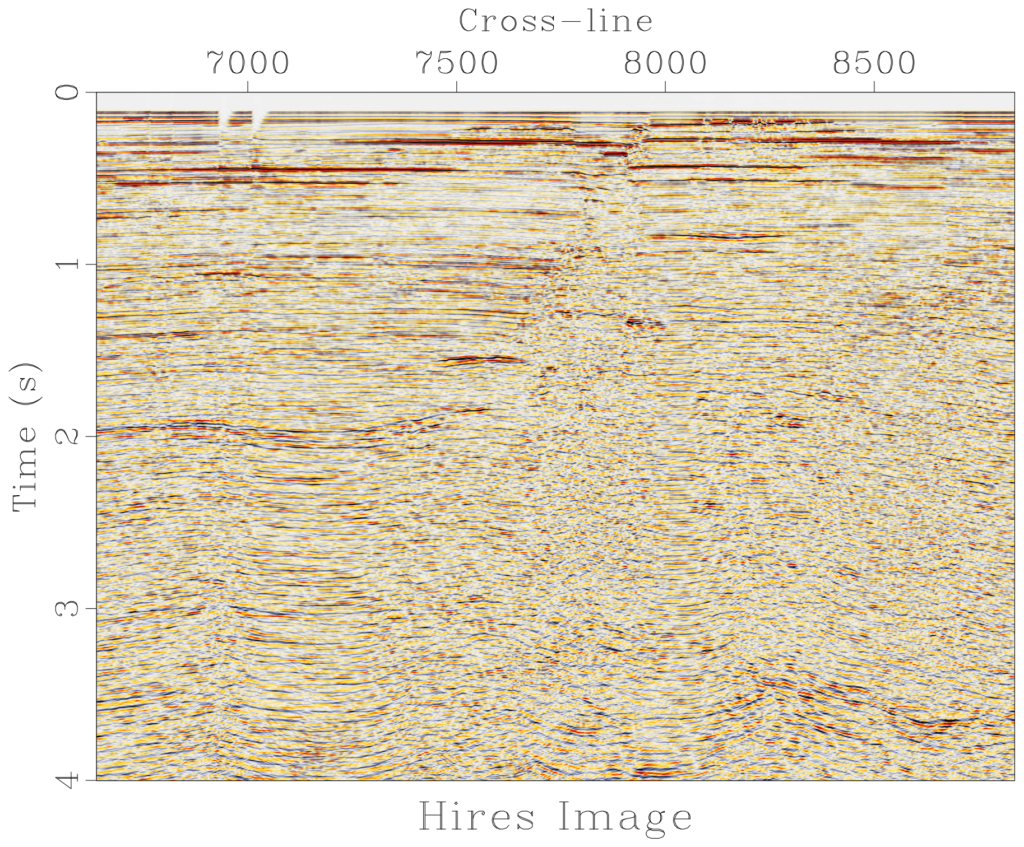

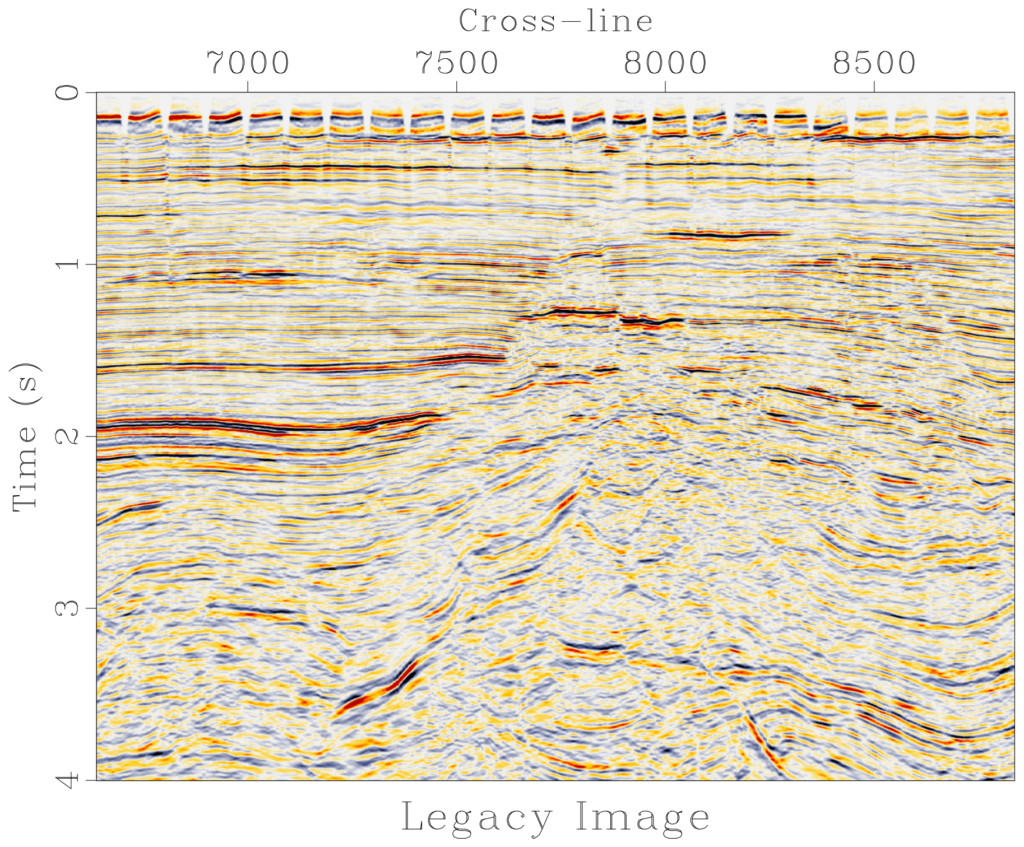

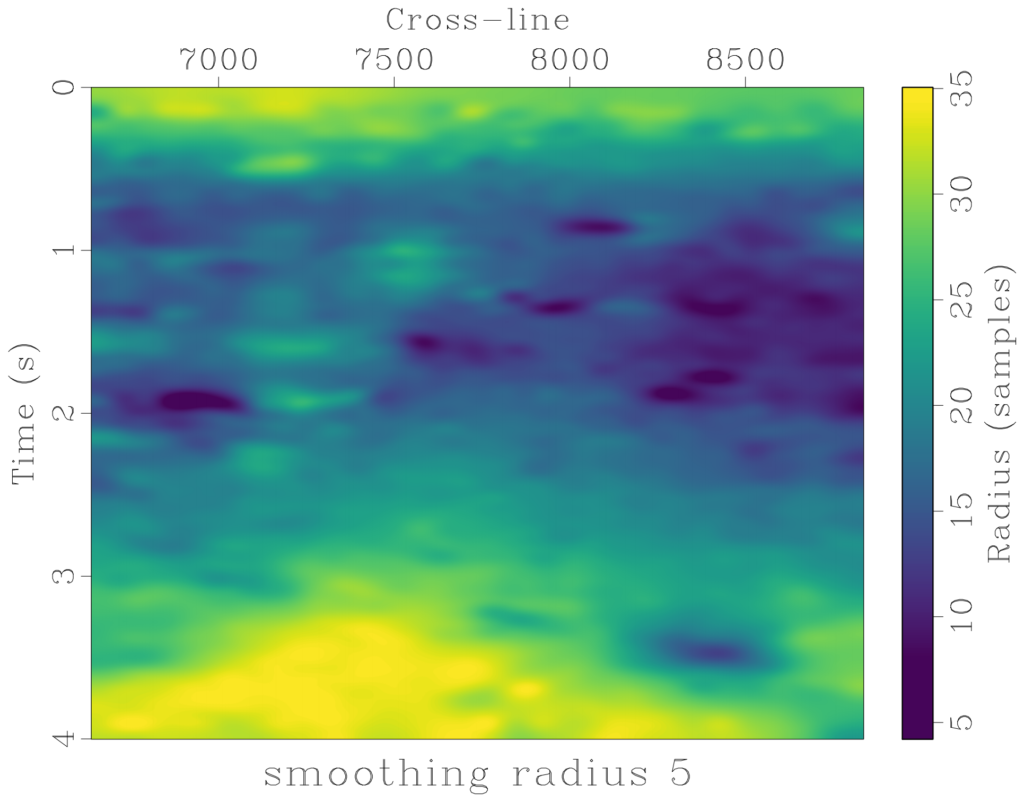

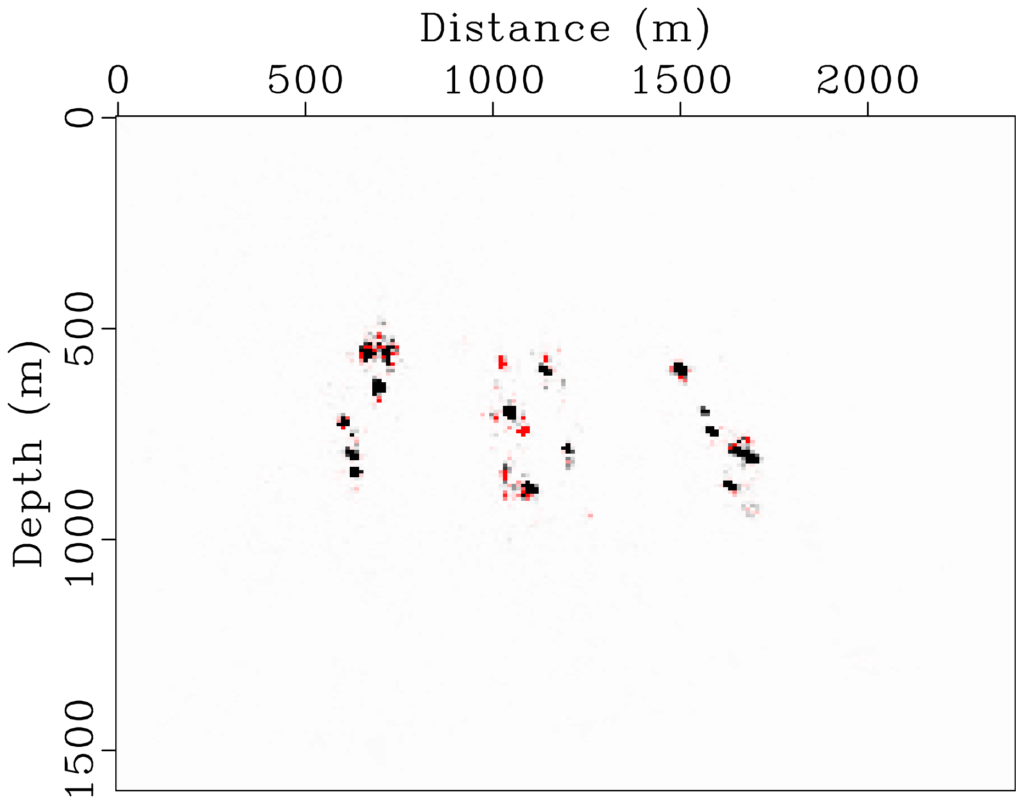

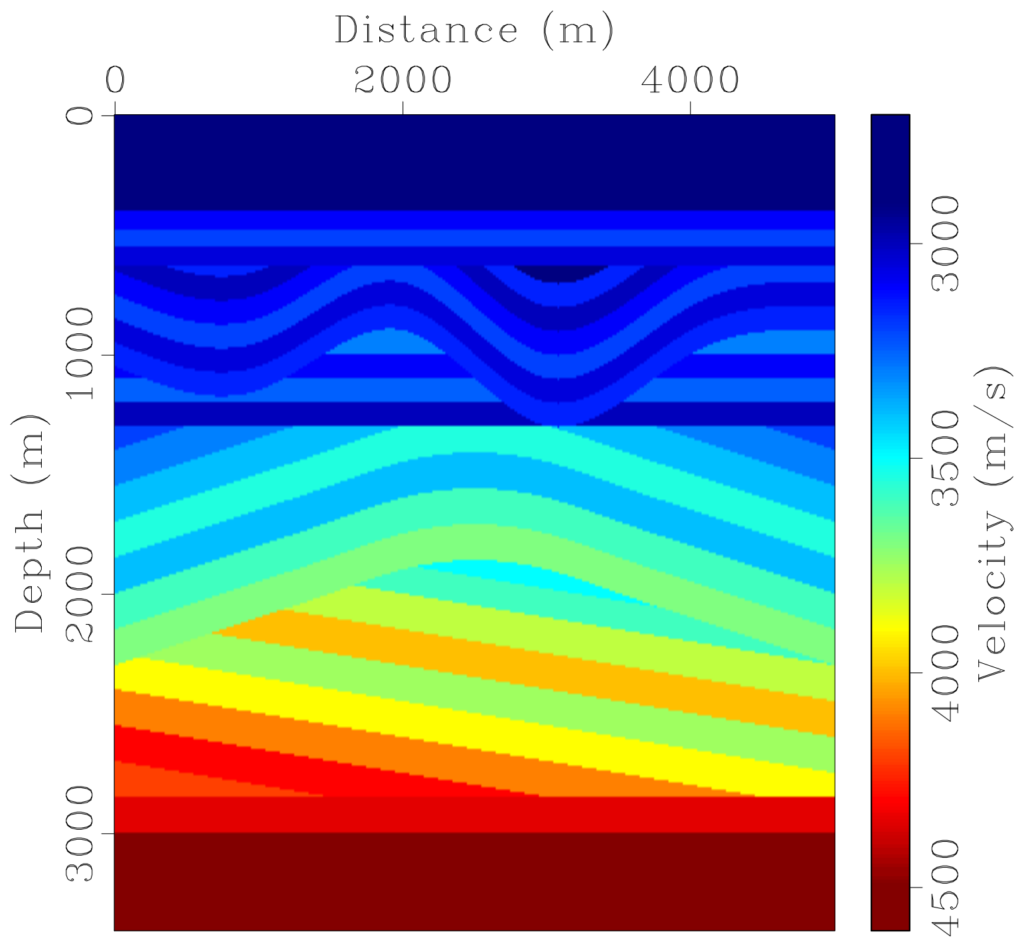

Time-frequency analysis is a useful approach to solving a variety of complex problems in seismic data processing. From a practical standpoint, most time-frequency transform techniques must trade off adaptability of time and frequency localization, flexibility in time and frequency sampling, and computational efficiency. To address this, we tailor streaming computation to implement a fast time-frequency transform, the streaming local time-frequency transform (SLTFT), which can significantly decrease the computational cost of adaptive time-frequency analysis. We add a localization scalar to the preceding streaming algorithm to circumvent the need for taper functions, which provides rapid forward and inverse transforms and applicability in various scenarios. We demonstrate the adaptive time-frequency characteristics of the proposed method, which offers a nonstationary time-frequency representation with variable time-frequency localization. Numerical tests indicate that the proposed SLTFT is a more balanced method than previous adaptive time-frequency transforms. It proves suitable for a range of practical applications in nonstationary seismic data processing, including ground-roll attenuation, inverse-Q filtering, and multicomponent data registration.
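The general idea of streaming computation mentioned in the abstract can be illustrated with a minimal sketch. It is not the paper's formulation: it assumes a per-sample regularized least-squares fit of the trace to a complex Fourier basis, in which each new coefficient vector is pulled toward the previous one, so that one pass over the data suffices and no iterative inversion is needed. The function names `streaming_tf` and `reconstruct` and the damping parameter `eps` are hypothetical, and the paper's localization scalar is not reproduced here.

```python
import numpy as np


def streaming_tf(d, dt, freqs, eps):
    """One-pass time-frequency coefficients (illustrative assumption only).

    At each sample i, minimize
        |d[i] - psi_i . c|^2 + eps^2 * ||c - c_prev||^2
    where psi_i[k] = exp(2j*pi*freqs[k]*i*dt). The minimizer has a closed
    form (a rank-one update), so the cost per sample is O(len(freqs)).
    """
    d = np.asarray(d, dtype=float)
    freqs = np.asarray(freqs, dtype=float)
    n, k = d.size, freqs.size
    coef = np.zeros((n, k), dtype=complex)
    prev = np.zeros(k, dtype=complex)  # coefficients before the first sample
    for i in range(n):
        psi = np.exp(2j * np.pi * freqs * (i * dt))
        resid = d[i] - psi @ prev  # misfit of the previous coefficients
        # Closed-form update; ||psi||^2 equals k because |psi[k]| = 1.
        prev = prev + np.conj(psi) * resid / (eps**2 + k)
        coef[i] = prev
    return coef


def reconstruct(coef, dt, freqs):
    """Inverse transform of the sketch: sum the basis over frequency."""
    t = np.arange(coef.shape[0]) * dt
    psi = np.exp(2j * np.pi * np.outer(t, np.asarray(freqs, dtype=float)))
    return np.sum(coef * psi, axis=1)
```

In this sketch a larger `eps` gives smoother coefficients along time at the cost of a larger reconstruction misfit, while `eps` close to zero makes `reconstruct` return the input trace almost exactly. The reconstruction is complex-valued in general because the basis here is not restricted to conjugate-symmetric frequency pairs.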